Businesses generate large volumes of voice data from calls, meetings, and voice interfaces, but manually processing this data is slow and difficult to scale.

Speech recognition (also called automatic speech recognition or speech-to-text) converts spoken language into text, enabling systems to analyze and automate voice-based workflows such as call transcription, voice assistants, and meeting summaries.

This article explores how speech recognition works, the algorithms involved, its applications in various industries, and real-life examples.

12 speech recognition use cases

Speech recognition is used in many industries to convert spoken language into text and enable voice-based interactions with systems. The following examples show common speech recognition use cases across sectors such as customer service, sales, automotive, healthcare, and technology.

Customer service and support

- Interactive Voice Response (IVR) systems: IVR systems automatically route callers to the appropriate department by recognizing spoken queries. They reduce call volumes and wait times by handling simple requests using pre-recorded responses or text-to-speech systems. Automatic Speech Recognition (ASR) enables IVR systems to understand and respond to customer inquiries in real time.

- Customer support automation and chatbots: Speech recognition enables voice-based chatbots and virtual assistants to handle routine customer service requests such as answering FAQs, guiding troubleshooting steps, and assisting with account inquiries.

- Sentiment analysis and call monitoring: Sentiment analysis classifies conversations as positive, negative, or neutral, helping organizations monitor service quality and identify customer concerns.

- Multilingual support: Speech recognition models can be trained to recognize multiple languages. When integrated into chatbots or IVR systems, they can detect the user’s language and switch to the appropriate model, helping organizations serve international customers (see Figure 1).

- Customer authentication with voice biometrics: Voice biometrics use speech recognition technologies to analyze a speaker’s voice and extract features such as accent and speed to verify their identity.

Figure 1: Image showing how a multilingual chatbot recognizes words in another language.

Sales and marketing

- Virtual sales assistants: AI-powered sales assistants interact with customers through voice and help guide purchasing decisions. Speech recognition allows these systems to understand spoken requests and respond based on customer intent.

- Transcription services: Speech recognition converts recordings of sales calls and meetings into written transcripts, enabling easier documentation and analysis.

Automotive

- Voice-activated controls: Voice-activated controls allow users to interact with devices and applications using voice commands. Drivers can operate features like climate control, phone calls, or navigation systems.

- Voice-assisted navigation: Voice-assisted navigation takes the destination from the driver’s voice input and provides real-time voice-guided directions. Drivers can also request real-time traffic updates or search for nearby points of interest using voice commands, without touching physical controls.

Healthcare

- Medical transcription: Medical transcription, also known as MT, is the process of converting voice-recorded medical reports into a written text document. The following are the main steps in the medical transcription process:

  - Recording the physician’s dictation.

  - Transcribing speech into text using speech recognition systems (some systems also include speaker diarization to distinguish between speakers).

  - Editing the transcribed text for better accuracy and correcting errors as needed.

  - Formatting the document in accordance with legal and medical requirements.

- Virtual medical assistants: Virtual medical assistants (VMAs) use speech recognition, natural language processing, and machine learning algorithms to communicate with patients through voice or text. Speech recognition software allows VMAs to respond to voice commands, retrieve information from electronic health records (EHRs) and automate the medical transcription process.

- Electronic Health Records (EHR) integration: Healthcare professionals can use voice commands to navigate the EHR system, access patient data, and enter data into specific fields.

Speech recognition real-life examples

Azure Speech

Azure Speech is a cloud-based AI service from Microsoft (part of Azure AI Foundry tools) that enables applications to process and generate spoken language. It provides capabilities such as:

Speech-to-text (automatic speech recognition): Converts spoken audio into written text with support for multiple transcription modes:

- Real-time transcription for streaming audio

- Fast transcription for recorded files

- Batch transcription for large volumes of audio

Developers can also create custom speech models to improve recognition accuracy for domain-specific vocabulary or noisy environments.

Text-to-speech (speech synthesis): Transforms written text into natural-sounding audio using neural voices. Developers can control voice characteristics such as pitch, speed, and pronunciation using Speech Synthesis Markup Language (SSML).

Azure Speech also supports custom neural voices, allowing organizations to create a unique voice for their applications.

Speech translation: Provides real-time multilingual speech translation, enabling speech-to-speech or speech-to-text translation in different languages.

Custom speech models: Developers can train custom models with their own data to improve recognition for:

- Industry-specific terminology

- Accents and speaking styles

- Noisy audio conditions

Voice avatars and conversational AI: Azure Speech can generate synthetic talking avatars and enable real-time voice interactions, supporting conversational AI systems and voice agents.

Figure 2: An example from Azure Voice AI agent, Voice Live.1

Deepgram

Deepgram provides APIs for integrating speech capabilities, such as speech-to-text transcription, text-to-speech synthesis, and voice intelligence.2

- Speech-to-text transcription: Converts audio into text for both real-time streaming and prerecorded audio.

- Text-to-speech: Generates natural-sounding speech from text for voice interfaces and assistants.

- Speaker diarization: Identifies and separates different speakers in an audio recording.

- Keyword detection and audio intelligence: Detects specific words or phrases and extracts insights from audio data.

- Custom speech models: Allows organizations to improve recognition accuracy using domain-specific data.

Deepgram’s use cases include:

- Customer service: Transcribing and analyzing call center conversations to monitor service quality and extract insights.

- Media and broadcasting: Generating captions and transcripts for podcasts, interviews, and live streams.

- Healthcare and legal: Converting spoken dictation and conversations into written documentation.

- Business analytics: Extracting keywords, sentiment, and insights from large volumes of audio data.

AssemblyAI

AssemblyAI is used in call center analytics, where customer support calls are transcribed and analyzed for quality monitoring and insights; meeting transcription, which generates transcripts and summaries of virtual meetings; and media transcription, enabling captions, transcripts, and searchable audio or video content.

It is also used for content moderation to detect inappropriate or restricted speech in audio streams and for voice data analytics, extracting information such as topics, entities, and sentiment from large volumes of recorded conversations.3

- Speech-to-text transcription: Converts audio streams or files into text with timestamps, confidence scores, and other metadata.

- Real-time streaming transcription: Processes live audio with low latency for voice agents and real-time applications.

- Audio intelligence: Extracts insights from speech, including speaker diarization, sentiment analysis, topic detection, and entity recognition.

- Summarization and speech understanding: Generates summaries and structured outputs from transcripts to support downstream workflows.

- Content moderation and PII redaction: Identifies or removes sensitive or inappropriate content from audio.

- Multilingual and language detection capabilities: Supports transcription across multiple languages and accents.

Google Cloud Speech-to-Text

Google Cloud Speech-to-Text allows developers to integrate its API to transcribe audio files, process live speech streams, and build voice-enabled features such as commands or search.4

- Real-time and batch transcription: Transcribes both streaming audio and prerecorded files.

- Multilingual support: Recognizes speech in more than 100 languages and variants.

- Advanced speech AI models: Uses Google’s speech models (e.g., Chirp 3) trained on large audio datasets for improved accuracy.

- Chirp 3 is Google’s latest speech AI model for automatic speech recognition (ASR). It is a multilingual generative model designed to convert spoken audio into text with higher accuracy and speed. The model improves transcription quality and supports features such as speaker diarization (identifying different speakers), automatic language detection, and multilingual speech recognition.

- Automatic punctuation and speaker features: Adds punctuation to transcripts and can distinguish between speakers in recordings.

What is speech recognition?

Speech recognition, also known as automatic speech recognition (ASR), speech-to-text (STT), and computer speech recognition, is a technology that enables a computer to recognize and convert spoken language into text.

Speech recognition technology uses AI and machine learning models to accurately identify and transcribe different accents, dialects, and speech patterns.

Speech recognition vs voice recognition

Speech recognition is commonly confused with voice recognition, but they refer to distinct concepts. Speech recognition converts spoken words into written text, focusing on identifying the words and sentences spoken by a user, regardless of the speaker’s identity.

On the other hand, voice recognition is concerned with recognizing or verifying a speaker’s voice, aiming to determine the identity of an unknown speaker rather than focusing on understanding the content of the speech.

What are the features of speech recognition systems?

Speech recognition systems have several components that work together to understand and process human speech. Key features of effective speech recognition are:

Audio preprocessing

After you have obtained the raw audio signal from an input device, you need to preprocess it to improve the quality of the speech input. The main goal of audio preprocessing is to capture relevant speech data by removing any unwanted artifacts and reducing noise.
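As a minimal sketch of what such preprocessing can look like, the Python snippet below trims leading and trailing silence, applies a pre-emphasis filter, and normalizes the peak amplitude. The function names, the 0.97 coefficient, and the 0.01 silence threshold are illustrative assumptions rather than values taken from any particular product; real systems typically add steps such as resampling and noise suppression.

```python
def trim_silence(samples, threshold=0.01):
    """Drop leading and trailing samples whose magnitude is below threshold."""
    start, end = 0, len(samples)
    while start < end and abs(samples[start]) < threshold:
        start += 1
    while end > start and abs(samples[end - 1]) < threshold:
        end -= 1
    return samples[start:end]


def pre_emphasis(samples, coeff=0.97):
    """Boost high frequencies: y[n] = x[n] - coeff * x[n-1]."""
    if not samples:
        return []
    out = [samples[0]]
    for prev, cur in zip(samples, samples[1:]):
        out.append(cur - coeff * prev)
    return out


def peak_normalize(samples, target_peak=1.0):
    """Scale the signal so its largest magnitude equals target_peak."""
    peak = max((abs(s) for s in samples), default=0.0)
    if peak == 0.0:
        return list(samples)
    return [s * target_peak / peak for s in samples]


def preprocess(samples):
    """Chain the three steps in a fixed (assumed) order."""
    return peak_normalize(pre_emphasis(trim_silence(samples)))


# Hypothetical raw signal: silence, a short burst, silence.
raw = [0.0, 0.001, 0.5, -0.3, 0.2, 0.0, 0.0]
print(preprocess(raw))
```

The order of the steps is a design choice; trimming first simply avoids spending work on samples that are discarded anyway.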

Feature extraction

This stage converts the preprocessed audio signal into a more informative representation. This makes raw audio data more manageable for machine learning models in speech recognition systems.
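To illustrate the idea, the sketch below splits a signal into overlapping frames and computes two simple per-frame features: log energy and zero-crossing rate. These are deliberately simplified stand-ins; many speech systems use richer features such as log-mel spectrograms or MFCCs. The function names and the default frame length and hop (400 and 160 samples, which would correspond to 25 ms and 10 ms at an assumed 16 kHz sampling rate) are illustrative assumptions.

```python
import math


def frame_signal(samples, frame_len, hop):
    """Split the signal into overlapping frames of frame_len samples."""
    last_start = len(samples) - frame_len
    return [samples[i:i + frame_len] for i in range(0, last_start + 1, hop)]


def log_energy(frame, eps=1e-10):
    """Log of the summed squared amplitude (eps avoids log(0))."""
    return math.log(sum(s * s for s in frame) + eps)


def zero_crossing_rate(frame):
    """Fraction of adjacent sample pairs whose signs differ."""
    if len(frame) < 2:
        return 0.0
    crossings = sum(1 for a, b in zip(frame, frame[1:]) if (a >= 0) != (b >= 0))
    return crossings / (len(frame) - 1)


def extract_features(samples, frame_len=400, hop=160):
    """Return one (log_energy, zero_crossing_rate) pair per frame."""
    return [
        (log_energy(frame), zero_crossing_rate(frame))
        for frame in frame_signal(samples, frame_len, hop)
    ]


# Hypothetical input: a 440 Hz tone sampled at an assumed 16 kHz.
tone = [math.sin(2 * math.pi * 440 * n / 16000) for n in range(1600)]
features = extract_features(tone)
print(len(features), features[0])
```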

Language model weighting

Language model weighting gives more weight to certain words and phrases, such as product names, that are expected to occur in the audio. This makes the speech recognition system more likely to recognize those keywords when they are spoken.
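One way to picture this weighting is as a rescoring step over candidate transcripts. The sketch below assumes the recognizer returns several hypotheses with log scores and adds a bonus to any hypothesis containing a boosted phrase. The function name, the scores, and the product name "Acme Sync" are hypothetical; commercial services expose their own mechanisms for this, which are not reproduced here.

```python
def rescore_with_boosts(hypotheses, boosts):
    """Pick the best hypothesis after adding phrase bonuses.

    hypotheses: list of (text, log_score) pairs from a recognizer (assumed format).
    boosts: dict mapping a phrase to the bonus added when the phrase occurs.
    """
    rescored = []
    for text, score in hypotheses:
        lowered = text.lower()
        bonus = sum(b for phrase, b in boosts.items() if phrase.lower() in lowered)
        rescored.append((text, score + bonus))
    return max(rescored, key=lambda pair: pair[1])


# Hypothetical n-best list: the acoustically preferred guess is wrong.
n_best = [
    ("i want the acne sink pro", -3.9),
    ("i want the acme sync pro", -4.2),
]
best_text, best_score = rescore_with_boosts(n_best, {"Acme Sync": 1.0})
print(best_text)
```

Without the boost the first hypothesis wins on raw score; with it, the product name tips the decision toward the second.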

Acoustic modeling

Acoustic modeling enables speech recognizers to capture and distinguish phonetic units within a speech signal. Acoustic models are trained on large datasets containing speech samples from a diverse set of speakers with different accents, speaking styles, and backgrounds.

Speaker labeling

Speaker labeling enables speech recognition applications to distinguish between multiple speakers in an audio recording. It assigns a unique label to each speaker, making it possible to identify which speaker was talking at any given time.

Profanity filtering

Profanity filtering is the process of detecting and removing offensive, inappropriate, or explicit words or phrases from audio data.
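A simple text-level version of this filter can be sketched as follows. Note the assumption: this example masks words in the transcript after recognition rather than editing the audio itself, and the blocklist is a hypothetical placeholder.

```python
import re


def mask_profanity(transcript, blocklist, mask_char="*"):
    """Replace each blocklisted word with mask characters of equal length."""
    if not blocklist:
        return transcript
    # Whole-word, case-insensitive match against every blocklisted term.
    alternatives = "|".join(re.escape(word) for word in sorted(blocklist))
    pattern = re.compile(r"\b(" + alternatives + r")\b", re.IGNORECASE)
    return pattern.sub(lambda m: mask_char * len(m.group(0)), transcript)


# "darn" stands in for a real blocklist entry.
print(mask_profanity("That darn printer is Darn slow", {"darn"}))
```

Matching on word boundaries keeps the filter from masking harmless words that merely contain a blocklisted term.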

What are the different speech recognition algorithms?

Speech recognition uses various algorithms and computational techniques to convert spoken language into written language. The following are some of the most commonly used speech recognition methods:

Hidden Markov Models (HMMs)

A hidden Markov model (HMM) is a statistical model commonly used in traditional speech recognition systems. HMMs capture the relationship between acoustic features and the underlying speech units, and they model the temporal dynamics of speech signals.
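To make the idea concrete, the sketch below runs the Viterbi algorithm, a standard way to find the most likely hidden state sequence in an HMM, on a toy model. The two states ("sil" for silence and "speech"), the two observation symbols, and all probabilities are made-up values for illustration; a real recognizer would use phonetic states and continuous acoustic features.

```python
def viterbi(observations, states, start_p, trans_p, emit_p):
    """Return (most likely state path, its probability) for an HMM."""
    # best[t][s] = (probability of the best path ending in s at time t, previous state)
    best = [{s: (start_p[s] * emit_p[s][observations[0]], None) for s in states}]
    for obs in observations[1:]:
        column = {}
        for s in states:
            column[s] = max(
                (best[-1][p][0] * trans_p[p][s] * emit_p[s][obs], p) for p in states
            )
        best.append(column)
    # Backtrack from the most probable final state.
    last = max(states, key=lambda s: best[-1][s][0])
    path = [last]
    for column in reversed(best[1:]):
        path.append(column[path[-1]][1])
    path.reverse()
    return path, best[-1][last][0]


# Toy model: hidden states emit "low" or "high" energy frames.
states = ["sil", "speech"]
start_p = {"sil": 0.8, "speech": 0.2}
trans_p = {
    "sil": {"sil": 0.7, "speech": 0.3},
    "speech": {"sil": 0.2, "speech": 0.8},
}
emit_p = {
    "sil": {"low": 0.9, "high": 0.1},
    "speech": {"low": 0.2, "high": 0.8},
}
path, prob = viterbi(["low", "high", "high", "low"], states, start_p, trans_p, emit_p)
print(path)  # ['sil', 'speech', 'speech', 'sil']
```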

Natural language processing (NLP)

NLP is a subfield of artificial intelligence that focuses on the interaction between humans and machines through natural language. Some of the key roles of NLP in speech recognition systems are to:

- Estimate the probability of word sequences in the recognized text

- Convert colloquial expressions and abbreviations in a spoken language into a standard written form

- Map phonetic units obtained from acoustic models to their corresponding words in the target language.
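The first role in the list above, estimating the probability of word sequences, can be sketched with a bigram language model. This is a minimal example under stated assumptions: the two-sentence training corpus is hypothetical, add-one smoothing is one simple choice among many, and modern systems generally rely on neural language models instead.

```python
import math
from collections import Counter


def train_bigram_model(sentences):
    """Count word pairs, with <s> and </s> marking sentence boundaries."""
    context_counts = Counter()
    bigram_counts = Counter()
    vocab = set()
    for sentence in sentences:
        tokens = ["<s>"] + sentence.lower().split() + ["</s>"]
        vocab.update(tokens)
        context_counts.update(tokens[:-1])
        bigram_counts.update(zip(tokens, tokens[1:]))
    return context_counts, bigram_counts, len(vocab)


def sequence_log_prob(sentence, model):
    """Log probability of a sentence under add-one smoothed bigrams."""
    context_counts, bigram_counts, vocab_size = model
    tokens = ["<s>"] + sentence.lower().split() + ["</s>"]
    return sum(
        math.log((bigram_counts[(a, b)] + 1) / (context_counts[a] + vocab_size))
        for a, b in zip(tokens, tokens[1:])
    )


# Hypothetical training corpus.
model = train_bigram_model(["recognize speech", "recognize speech now"])
print(sequence_log_prob("recognize speech", model))
print(sequence_log_prob("speech recognize", model))
```

A recognizer can use scores like these to prefer the word order it has seen in training text over an acoustically similar but unlikely alternative.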

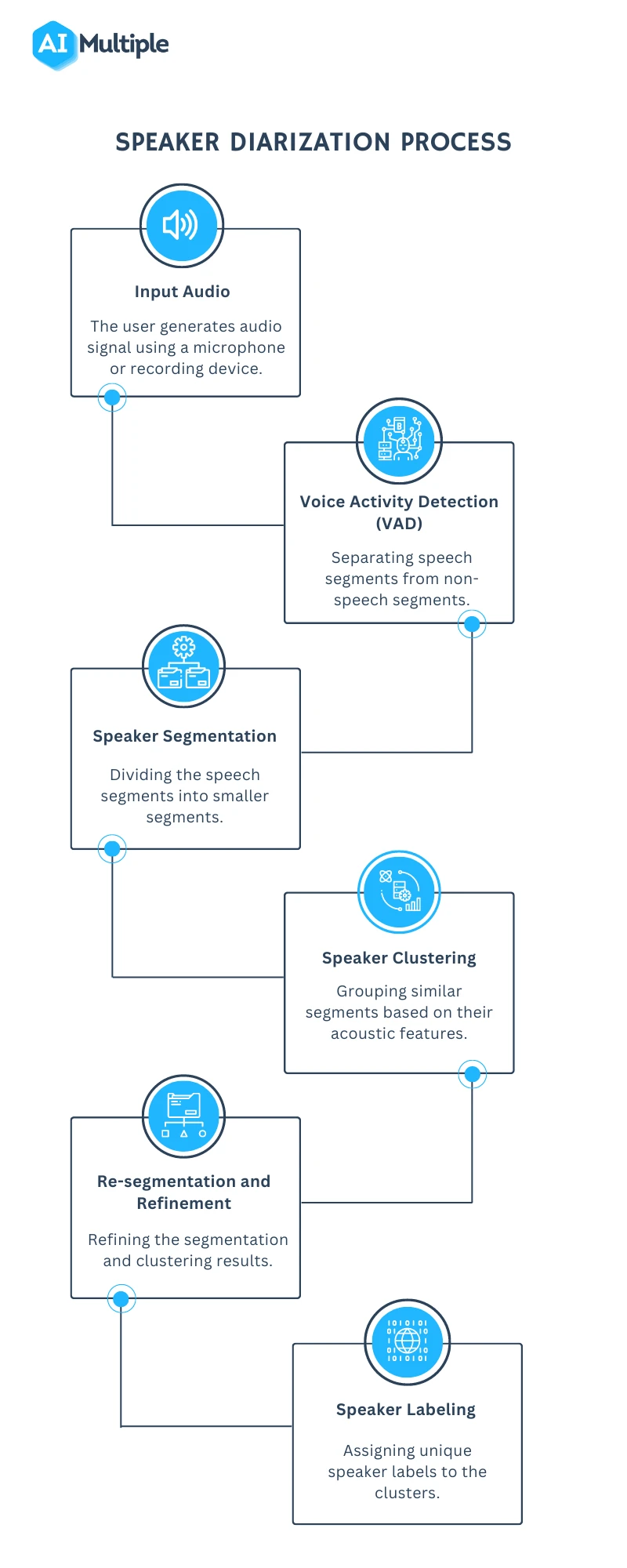

Speaker Diarization (SD)

Speaker diarization, or speaker labeling, is the process of identifying and attributing speech segments to their respective speakers (Figure 3). It allows for speaker-specific voice recognition and the identification of individuals in a conversation.

Figure 3: A flowchart illustrating the speaker diarization process
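The core grouping step of diarization can be sketched as a toy clustering problem. The example assumes each speech segment has already been turned into a speaker embedding (a vector summarizing voice characteristics) by some upstream model; the two-dimensional vectors and the 0.8 similarity threshold are made-up values. It uses a simple greedy assignment, whereas real diarization systems use more robust clustering.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def label_speakers(segment_embeddings, threshold=0.8):
    """Assign a speaker index to each segment, in order of first appearance."""
    references = []  # first embedding seen for each speaker
    labels = []
    for embedding in segment_embeddings:
        sims = [cosine_similarity(embedding, ref) for ref in references]
        if sims and max(sims) >= threshold:
            labels.append(sims.index(max(sims)))
        else:
            references.append(embedding)
            labels.append(len(references) - 1)
    return labels


# Hypothetical embeddings for five consecutive segments.
segments = [[1.0, 0.0], [0.9, 0.1], [0.0, 1.0], [0.1, 0.95], [1.0, 0.05]]
print(label_speakers(segments))  # [0, 0, 1, 1, 0]
```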

Dynamic Time Warping (DTW)

Speech recognition algorithms use the Dynamic Time Warping (DTW) algorithm to find an optimal alignment between two sequences, such as a spoken utterance and a reference template, that may differ in speed or length (Figure 4).

Figure 4: A speech recognizer using dynamic time warping to determine the optimal distance between elements.5
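A minimal sketch of the classic DTW recurrence is shown below for one-dimensional sequences. Using the absolute difference as the local cost and plain numbers instead of feature vectors are simplifying assumptions for illustration.

```python
def dtw_distance(a, b):
    """Cost of the best monotonic alignment between sequences a and b."""
    inf = float("inf")
    n, m = len(a), len(b)
    # cost[i][j] = minimal accumulated cost aligning a[:i] with b[:j]
    cost = [[inf] * (m + 1) for _ in range(n + 1)]
    cost[0][0] = 0.0
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            local = abs(a[i - 1] - b[j - 1])
            cost[i][j] = local + min(
                cost[i - 1][j],      # a advances, b stays
                cost[i][j - 1],      # b advances, a stays
                cost[i - 1][j - 1],  # both advance
            )
    return cost[n][m]


# The second sequence is the first one spoken "more slowly".
print(dtw_distance([1, 2, 3], [1, 2, 2, 3]))  # 0.0
```

Because one element may be matched to several elements of the other sequence, a stretched copy of a pattern aligns with zero cost, which a plain element-by-element comparison could not achieve.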

Deep neural networks

Deep neural networks pass acoustic features through multiple layers of non-linear transformations, learning to map audio input to phonetic units, characters, or words directly from training data.

Connectionist Temporal Classification (CTC)

Connectionist Temporal Classification (CTC) is a training objective introduced by Alex Graves and colleagues in 2006. It is especially useful for sequence labeling tasks and end-to-end speech recognition systems because it lets the neural network learn the alignment between input frames and output labels on its own, without requiring frame-level alignments in the training data.
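The sketch below does not implement the CTC training loss itself. Instead it shows the decoding rule that makes CTC outputs readable: take the most likely label per frame, merge consecutive repeats, and drop the special blank label. The alphabet, the blank index of 0, and the per-frame probabilities are hypothetical.

```python
def ctc_greedy_decode(frame_probs, alphabet, blank=0):
    """Collapse per-frame predictions into a label sequence.

    frame_probs: one probability list per frame, indexed like alphabet.
    """
    best_per_frame = [max(range(len(p)), key=p.__getitem__) for p in frame_probs]
    decoded = []
    previous = None
    for index in best_per_frame:
        # Keep a label only when it differs from the previous frame and is not blank.
        if index != previous and index != blank:
            decoded.append(alphabet[index])
        previous = index
    return "".join(decoded)


alphabet = ["<blank>", "a", "c", "t"]
frames = [
    [0.1, 0.1, 0.7, 0.1],  # c
    [0.1, 0.1, 0.7, 0.1],  # c (repeat, merged)
    [0.8, 0.1, 0.05, 0.05],  # blank
    [0.1, 0.7, 0.1, 0.1],  # a
    [0.1, 0.7, 0.1, 0.1],  # a (repeat, merged)
    [0.8, 0.1, 0.05, 0.05],  # blank
    [0.1, 0.1, 0.1, 0.7],  # t
]
print(ctc_greedy_decode(frames, alphabet))  # cat
```

The blank label is what allows genuinely doubled letters: "a, blank, a" decodes to "aa", while "a, a" decodes to a single "a".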

What are the challenges of speech recognition?

While speech recognition technology offers many benefits, it still faces a number of challenges that need to be addressed. Some of the main limitations of speech recognition include:

Acoustic challenges

Accents and dialects

Accents and dialects differ in pronunciation, vocabulary, and grammar, making it difficult for speech recognition applications to recognize speech accurately.

Assume a speech recognition model has been trained primarily on American English accents. A speaker with a strong Scottish accent may encounter difficulties because of pronunciation differences. For example, the word “water” is pronounced differently in the two accents, so a system unfamiliar with the Scottish pronunciation may fail to recognize it.

Solution: Expand the training data to include samples from speakers with diverse accents. This helps the system handle pronunciation variations and recognize a broader range of speech patterns.

Background noise

Background noise (e.g., traffic, cross-talk) makes it difficult for speech recognition applications to distinguish speech from other sounds (see Figure 5).

Solution: Pre-processing techniques can be used to reduce background noise in speech recognition, which can help improve the performance of speech recognition models in noisy environments.

For instance, data augmentation can add recorded or synthetic noise to clean training audio. Training speech recognition models on this noisy data improves their accuracy in real-world environments.

Figure 5: Examples of a target sentence (“The clown had a funny face”) in the background noise of babble, car and rain.6
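The noise-based data augmentation described above can be sketched as mixing a noise recording into clean speech at a chosen signal-to-noise ratio (SNR). The function names, the synthetic sine "speech", and the Gaussian "noise" are assumptions made for illustration; a real pipeline would load recorded audio such as the babble, car, or rain noise mentioned in the figure.

```python
import math
import random


def mean_power(samples):
    """Average squared amplitude of a signal."""
    return sum(s * s for s in samples) / len(samples)


def mix_at_snr(speech, noise, snr_db):
    """Add noise to speech so the result has the requested SNR in decibels."""
    # Loop the noise so it covers the whole speech signal.
    noise = [noise[i % len(noise)] for i in range(len(speech))]
    noise_power = mean_power(noise)
    if noise_power == 0.0:
        return list(speech)
    # Choose the scale so that speech_power / scaled_noise_power = 10^(snr_db / 10).
    scale = math.sqrt(mean_power(speech) / (noise_power * 10 ** (snr_db / 10)))
    return [s + scale * n for s, n in zip(speech, noise)]


# Synthetic stand-ins for a clean utterance and a noise recording.
rng = random.Random(0)
clean = [math.sin(0.1 * i) for i in range(1000)]
background = [rng.gauss(0.0, 1.0) for _ in range(300)]
noisy = mix_at_snr(clean, background, snr_db=10)
print(len(noisy))
```

Generating several copies of each utterance at different SNR values is a common way to expose a model to a range of noise levels during training.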

Linguistic challenges

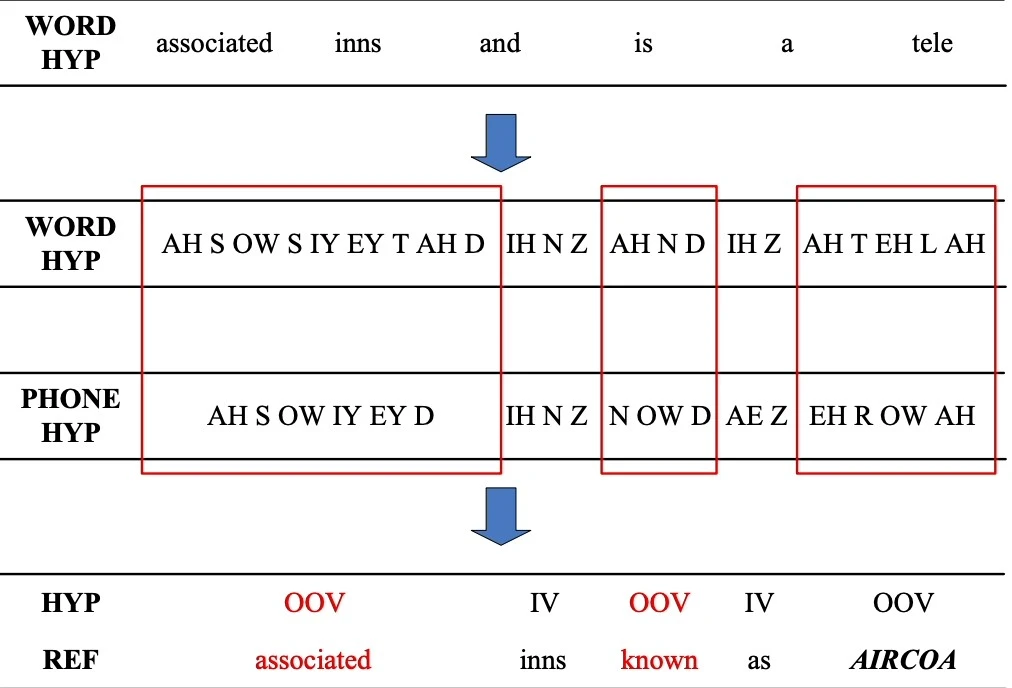

Out-of-vocabulary words

Out-of-vocabulary (OOV) words are words that do not appear in the model’s training vocabulary. Because the speech recognizer has not been trained on them, it may transcribe them as different words or fail to transcribe them at all.

Figure 6: An example of detecting an OOV word.

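Solution: Word Error Rate (WER) is a common metric used to measure the accuracy of a speech recognition or machine translation system, and it can reveal how much OOV words degrade transcription quality. WER is the number of substituted, deleted, and inserted words divided by the number of words in the reference transcript: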

Figure 7: Demonstrating how to calculate word error rate (WER).7
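As a worked example, WER can be computed with a word-level edit distance. The sketch below uses the standard definition, (substitutions + deletions + insertions) divided by the number of reference words; splitting on whitespace without any text normalization is a simplifying assumption.

```python
def word_error_rate(reference, hypothesis):
    """WER = (substitutions + deletions + insertions) / reference length."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference transcript must contain at least one word")
    # Word-level Levenshtein distance, keeping only the previous row.
    previous = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        current = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            current.append(min(
                previous[j] + 1,                           # deletion
                current[j - 1] + 1,                        # insertion
                previous[j - 1] + (ref_word != hyp_word),  # substitution or match
            ))
        previous = current
    return previous[-1] / len(ref)


reference = "the clown had a funny face"
print(word_error_rate(reference, "the crown had a funny face"))  # one substitution in six words
```

Because insertions count as errors, WER can exceed 1.0 when the hypothesis is much longer than the reference.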

Homophones

Homophones are words that are pronounced identically but have different meanings, such as “to,” “too,” and “two”.

Solution: Semantic analysis allows speech recognition programs to select the appropriate homophone based on its intended meaning in a given context. Addressing homophones improves the ability of the speech recognition process to understand and transcribe spoken words accurately.
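A very simplified stand-in for this kind of context-based selection is sketched below. Instead of full semantic analysis, it picks the homophone that most often appears next to the neighboring words, using a table of word-pair counts. The function name and all counts are hypothetical; a production system would typically rely on a trained language model.

```python
def pick_homophone(previous_word, candidates, next_word, pair_counts):
    """Choose the candidate seen most often beside its neighboring words."""
    def context_score(word):
        return (
            pair_counts.get((previous_word, word), 0)
            + pair_counts.get((word, next_word), 0)
        )
    return max(candidates, key=context_score)


# Hypothetical word-pair counts gathered from some text corpus.
pair_counts = {
    ("want", "to"): 50,
    ("to", "go"): 40,
    ("me", "too"): 30,
    ("have", "two"): 25,
    ("two", "cats"): 20,
}
homophones = ["to", "too", "two"]
print(pick_homophone("want", homophones, "go", pair_counts))    # to
print(pick_homophone("have", homophones, "cats", pair_counts))  # two
```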

Technical/system challenges

Data privacy and security

Speech recognition systems involve processing and storing sensitive and personal information, such as financial information. An unauthorized party could use the captured information, leading to privacy breaches.

Solution: You can encrypt sensitive and personal audio information transmitted between the user’s device and the speech recognition software. Another technique for addressing data privacy and security in speech recognition systems is data masking. Data masking algorithms mask and replace sensitive speech data with structurally identical but acoustically different data.

Figure 8: An example of how data masking works.
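The masking idea can be illustrated at the transcript level. Note the assumption: the article describes masking speech data, whereas this sketch works on text, replacing every digit with a random digit so that the structure of, say, a card number is preserved while the actual value is not. The function name and the sample sentence are hypothetical.

```python
import random
import re


def mask_digits(transcript, seed=None):
    """Replace each digit with a random digit, keeping the original layout."""
    rng = random.Random(seed)  # a seed makes the masking repeatable for testing
    return re.sub(r"\d", lambda match: str(rng.randrange(10)), transcript)


original = "My card number is 4111 1111 1111 1111"
masked = mask_digits(original, seed=1)
print(masked)
```

Keeping the format intact means downstream tools that expect, for example, four groups of four digits continue to work on the masked data.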

Limited training data

Limited training data directly impacts the performance of speech recognition software. With insufficient training data, the speech recognition model may struggle to generalize across different accents or to recognize less common words.

Solution: To improve the quality and quantity of training data, you can expand the existing dataset using data augmentation and synthetic data generation technologies.
